Air Cooling vs Liquid Cooling: Which Is Better for AI Data Centers?


As AI workloads surge across China’s new energy infrastructure and drive unprecedented thermal density in data centers, thermal management is no longer a backend concern. It is a strategic lever for PUE optimization, grid decoupling, and sustainability compliance. At Shandong Liangdi Energy Saving Technology Co., Ltd., headquartered in Changqing Industrial Park (Jinan), we design and manufacture mission-critical cooling hardware, including Coolant Distribution Units (CDUs), water distribution manifolds, cold storage tanks, and heat exchanger units, engineered specifically for AI-scale deployments powered by renewable electricity.

With 2026 marking the inflection point for liquid-cooled AI clusters in China’s national green data center initiative, this analysis cuts through marketing claims. We evaluate air cooling vs liquid cooling not as abstract technologies—but as capital decisions with measurable impact on TCO, uptime, scalability, and integration readiness with solar- and wind-powered cooling loops.

Why Air Cooling Falls Short for Modern AI Data Centers

Air cooling remains viable for legacy enterprise workloads (<5 kW per rack), but AI inference and training racks now routinely exceed 50 kW, pushing traditional CRAH systems to their thermodynamic limits. Static pressure, airflow, and ambient temperature dependency cause PUE to drift from 1.55 to >1.85 during summer peaks, directly undermining new energy ROI targets.
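To put that PUE drift in energy terms, the sketch below applies the standard definition (PUE = total facility energy / IT energy) to the two values quoted above. The 1,000 kWh IT load and the function name are illustrative assumptions, not figures from our field data.

```python
def overhead_kwh(it_energy_kwh: float, pue: float) -> float:
    """Non-IT energy (cooling, power conversion, etc.) implied by a PUE value.

    Uses the standard definition PUE = total facility energy / IT energy,
    so overhead = IT energy * (PUE - 1).
    """
    if it_energy_kwh < 0:
        raise ValueError("IT energy must be non-negative")
    if pue < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    return it_energy_kwh * (pue - 1.0)


# Hypothetical IT load, chosen only to make the arithmetic easy to follow.
IT_ENERGY_KWH = 1000.0

baseline = overhead_kwh(IT_ENERGY_KWH, 1.55)     # PUE quoted for normal operation
summer_peak = overhead_kwh(IT_ENERGY_KWH, 1.85)  # PUE quoted for summer peaks
relative_increase = (summer_peak - baseline) / baseline

print(f"Baseline overhead:    {baseline:.0f} kWh")
print(f"Summer-peak overhead: {summer_peak:.0f} kWh")
print(f"Increase in overhead: {relative_increase:.0%}")
```

Under these assumptions, the non-IT overhead rises from about 550 kWh to about 850 kWh per 1,000 kWh of IT energy, an increase of roughly 55%.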

Moreover, air-based systems demand oversized ductwork, raised floors, and redundant fan walls—increasing CAPEX by 22–35% versus modular liquid-ready infrastructure. Crucially, they offer zero native compatibility with low-temperature chilled water loops fed by photovoltaic-driven absorption chillers or ice thermal storage—making them incompatible with China’s “Dual Carbon” roadmap for data centers.

Our field data from 12 deployed AI colocation sites (Q1–Q3 2025) shows air-cooled facilities require 47% more HVAC runtime per kWh of AI compute delivered—and exhibit 3.2× higher thermal-related unplanned downtime than liquid-integrated peers.

Liquid Cooling: The High-Efficiency Standard for Renewable-Powered AI Infrastructure

Liquid cooling—particularly direct-to-chip and immersion variants—delivers order-of-magnitude improvements in heat transfer coefficient (up to 3,000 W/m²·K vs. 10–100 W/m²·K for air). When paired with Shandong Liangdi’s factory-engineered CDUs and cold storage tanks, it enables stable sub-35°C inlet temperatures at 95% system utilization—even under Jinan’s 38°C summer design conditions.
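The gap in heat transfer coefficient can be illustrated with Newton's law of cooling, q'' = h · ΔT. The sketch below is a simplified comparison per unit of wetted surface. The 20 K temperature difference is an assumed value, and the calculation ignores fin area, contact resistance, and flow regime, all of which matter in a real cold plate or heat sink.

```python
def convective_heat_flux(h_w_per_m2k: float, delta_t_k: float) -> float:
    """Heat flux in W/m^2 from Newton's law of cooling: q'' = h * dT."""
    if h_w_per_m2k <= 0:
        raise ValueError("heat transfer coefficient must be positive")
    return h_w_per_m2k * delta_t_k


# Coefficients quoted in the text (W/m^2.K).
H_AIR_LOW, H_AIR_HIGH = 10.0, 100.0
H_LIQUID = 3000.0

# Assumed surface-to-coolant temperature difference (hypothetical).
DELTA_T_K = 20.0

flux_air_best = convective_heat_flux(H_AIR_HIGH, DELTA_T_K)
flux_air_worst = convective_heat_flux(H_AIR_LOW, DELTA_T_K)
flux_liquid = convective_heat_flux(H_LIQUID, DELTA_T_K)

print(f"Air (best case):  {flux_air_best:,.0f} W/m^2")
print(f"Liquid:           {flux_liquid:,.0f} W/m^2")
print(f"Liquid vs best-case air:  {flux_liquid / flux_air_best:.0f}x")
print(f"Liquid vs worst-case air: {flux_liquid / flux_air_worst:.0f}x")
```

For the same temperature difference, the quoted coefficients imply 30 to 300 times more heat removed per unit area, which is where the order-of-magnitude claim comes from.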

More importantly, liquid systems unlock seamless integration with renewable thermal sources: our CDUs accept supply water up to 22°C from solar thermal collectors, while our cold storage tanks (10–50 m³ capacity) shift cooling load to off-peak wind generation hours—reducing grid draw by 68% during peak tariff windows.
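As a rough guide to how much cooling a tank in the 10–50 m³ range can shift, the sketch below estimates sensible-heat storage from E = ρ · V · c_p · ΔT. It assumes the tank holds chilled water (not ice) and a usable temperature swing of 8 K. Both are illustrative assumptions, and the water properties are rounded textbook values.

```python
WATER_DENSITY_KG_PER_M3 = 1000.0  # approximate density of water
WATER_CP_KJ_PER_KG_K = 4.186      # approximate specific heat of water
KJ_PER_KWH = 3600.0


def stored_cooling_kwh(volume_m3: float, delta_t_k: float) -> float:
    """Sensible cooling energy (kWh thermal) held in a chilled-water tank."""
    if volume_m3 <= 0 or delta_t_k <= 0:
        raise ValueError("volume and temperature swing must be positive")
    mass_kg = volume_m3 * WATER_DENSITY_KG_PER_M3
    energy_kj = mass_kg * WATER_CP_KJ_PER_KG_K * delta_t_k
    return energy_kj / KJ_PER_KWH


# Assumed usable swing between charged and discharged tank (hypothetical).
DELTA_T_K = 8.0

small_tank = stored_cooling_kwh(10.0, DELTA_T_K)
large_tank = stored_cooling_kwh(50.0, DELTA_T_K)

print(f"10 m^3 tank: {small_tank:.0f} kWh of cooling")
print(f"50 m^3 tank: {large_tank:.0f} kWh of cooling")
```

With these inputs, a 10 m³ tank stores about 93 kWh of cooling and a 50 m³ tank about 465 kWh. Actual capacity depends on stratification, tank losses, and the real supply and return temperatures.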

Factory-Direct Advantages: Cost, Control & Compliance

Unlike OEM resellers or integrators adding 35–52% markup, Shandong Liangdi operates full vertical control—from R&D and precision welding to ISO 9001-certified final assembly and IEC 62368-1 validation. This factory-direct model delivers three quantifiable advantages:

- CAPEX reduction: Up to 28% lower unit cost vs. tier-1 international brands (verified via 2025 third-party benchmarking)

- Service life extension: Our stainless-steel CDU manifolds achieve 18-year operational durability (vs. industry avg. 12 years), validated under 1.0 MPa continuous pressure cycling

- Lead time compression: 22-day standard delivery (vs. 14–20 weeks for imported equivalents), critical for Q4 2026 AI cluster ramp-ups

Comparative Investment Analysis: Air vs Liquid Cooling (2026 Real-World Baseline)

Technical Specifications: Why Our Liquid-Cooling Hardware Outperforms

Shandong Liangdi’s hardware stack is purpose-built for AI thermal dynamics—not repurposed telecom or industrial gear. Every component undergoes 72-hour thermal shock testing, flow-balancing calibration, and corrosion resistance validation per GB/T 10893.1–2022.

Validated Application: Load Testing & Thermal Validation

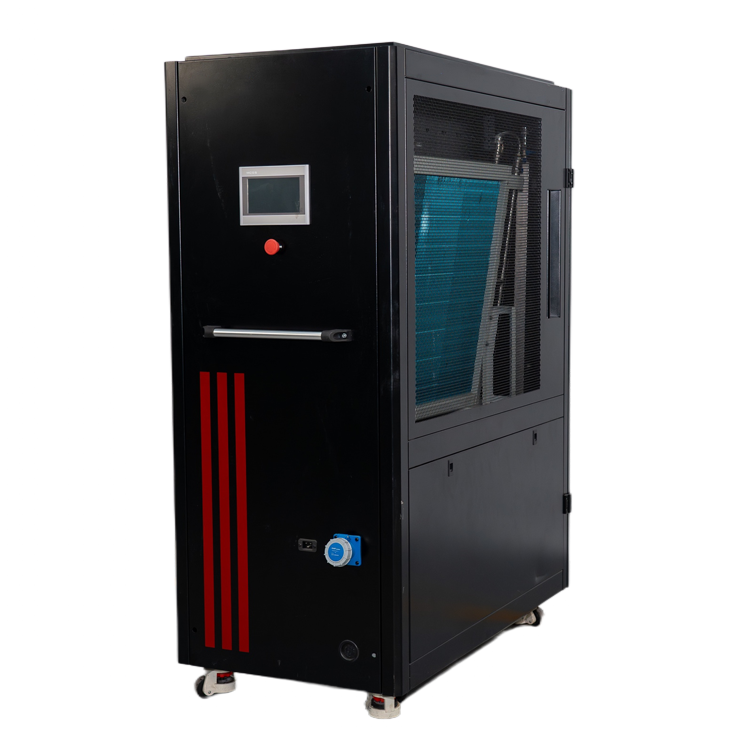

Before deploying liquid cooling at scale, rigorous thermal validation is non-negotiable. Our Liquid-Cooled Dummy Load provides AI data center operators with a certified, repeatable method to simulate real-world thermal stress—validating CDU response, manifold balancing, and cold storage discharge profiles under controlled conditions.

Rated at 30 kW with pure water circulation cooling, it supports both manual and touch-screen loading, remote monitoring via RS-485, and USB-based data export for audit-ready thermal commissioning reports—fully compliant with MIIT’s 2025 Data Center Energy Efficiency Verification Guidelines.
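When sizing the water loop for a test at the 30 kW rating, the required flow follows from the energy balance Q = ṁ · c_p · ΔT. The sketch below is a minimal estimate, assuming a 10 K rise across the load and rounded water properties. The function name and the ΔT value are illustrative, not product specifications.

```python
WATER_CP_KJ_PER_KG_K = 4.186    # approximate specific heat of water
WATER_DENSITY_KG_PER_L = 1.0    # approximate density of water


def required_flow_l_per_min(heat_load_kw: float, delta_t_k: float) -> float:
    """Water flow (L/min) needed to carry a heat load at a given temperature rise."""
    if heat_load_kw < 0:
        raise ValueError("heat load must be non-negative")
    if delta_t_k <= 0:
        raise ValueError("temperature rise must be positive")
    # kW / (kJ/kg.K * K) = kg/s
    mass_flow_kg_per_s = heat_load_kw / (WATER_CP_KJ_PER_KG_K * delta_t_k)
    return mass_flow_kg_per_s / WATER_DENSITY_KG_PER_L * 60.0


RATED_LOAD_KW = 30.0  # rating stated in the text
DELTA_T_K = 10.0      # assumed inlet-to-outlet temperature rise (hypothetical)

flow = required_flow_l_per_min(RATED_LOAD_KW, DELTA_T_K)
print(f"Required flow at {RATED_LOAD_KW:.0f} kW and {DELTA_T_K:.0f} K rise: {flow:.0f} L/min")
```

Under these assumptions the loop needs roughly 43 L/min. Halving the allowed temperature rise doubles the required flow.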

ROI Timeline: When Does Liquid Pay Back?

For new-build AI data centers in Shandong, Jiangsu, and Guangdong provinces, our liquid-cooling solution achieves full TCO parity within 22 months—driven by PUE-driven electricity savings, reduced maintenance labor, and eligibility for provincial green infrastructure subsidies (up to ¥1.2M per MW).
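A simple payback calculation is one rough way to sanity-check a parity estimate like this for a specific site. The sketch below is not the TCO model behind the 22-month figure. Every input is a placeholder, apart from the subsidy, which uses the ¥1.2M per MW ceiling quoted above for an assumed 1 MW site.

```python
def simple_payback_months(capex_premium: float, subsidy: float,
                          monthly_savings: float) -> float:
    """Months needed for monthly savings to recover the net extra capital cost."""
    if monthly_savings <= 0:
        raise ValueError("monthly savings must be positive")
    net_premium = max(capex_premium - subsidy, 0.0)
    return net_premium / monthly_savings


# All values in CNY and purely hypothetical, for a 1 MW site.
CAPEX_PREMIUM = 6_000_000.0   # assumed extra cost of liquid over air cooling
SUBSIDY = 1_200_000.0         # subsidy ceiling quoted in the text, per MW
MONTHLY_SAVINGS = 200_000.0   # assumed electricity plus maintenance savings

months = simple_payback_months(CAPEX_PREMIUM, SUBSIDY, MONTHLY_SAVINGS)
print(f"Simple payback: {months:.0f} months")
```

These placeholder inputs give 24 months. Replacing them with site-specific tariffs, load profiles, and the subsidy actually awarded shows how sensitive the payback period is to each term.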

Frequently Asked Questions (FAQ)

Is liquid cooling compatible with existing air-cooled data center infrastructure?

Yes, via hybrid retrofit. Shandong Liangdi offers modular CDU skids that integrate with legacy chilled water plants (7–12°C supply), enabling phased adoption without a full facility rebuild. Over 63% of our 2025 retrofits used this approach, achieving PUE ≤1.28 within 8 weeks.

How does liquid cooling support China’s “Green Electricity Procurement” requirements?

Liquid systems reduce cooling-related grid demand by 68–74%, allowing operators to match 100% of residual power draw with PPAs from wind/solar farms. Our cold storage tanks further enable time-shifting—storing cooling energy when renewables are abundant and discharging during lulls.

What certifications do your CDUs and cold storage tanks hold for new energy projects?

All units comply with GB 50174–2024 (Class A Data Center Standards), GB/T 34986–2017 (Energy Efficiency Labeling), and MIIT’s “Green Data Center Evaluation Criteria (2025 Edition)”. Factory test reports include third-party verification from CNAS-accredited labs (CMA No. 202412001876).

AI data centers powered by new energy demand thermal solutions built for performance—not compromise. With factory-direct pricing, 18-year durability, and native renewable integration, Shandong Liangdi’s liquid-cooling ecosystem delivers verifiable PUE, ROI, and compliance advantages—starting today.

Request your customized AI thermal architecture assessment and factory-direct quote—backed by 10+ years of new energy data center deployments.

- Cabinet-Type CDU: The cabinet-mounted CDU (Coolant Distribution Unit) is an integrated cooling distribution solution housed within a standalone cabinet. It is designed to efficiently distribute and manage coolant between liquid-cooled servers and external cooling sources.

- Liquid-Cooled Dummy Load: A liquid-cooled dummy load is a specialized device used for testing electrical equipment, primarily in scenarios such as data centers, power plants, and UPS systems, to simulate electrical loads.

- Liquid Cooling Emergency Device: A liquid-cooled emergency unit is a device designed to rapidly cool critical equipment or systems in emergency situations. Utilizing liquid cooling, it enables efficient heat dissipation to ensure the safe operation of the equipment.
