What Happens When a Liquid Cooling System Fails? (Contingency Plans Explained)

When HPC liquid cooling solutions fail, the consequences can be severe - from system downtime to hardware damage. This article explores critical contingency plans for data center operators and end-users relying on precision cooling systems. Discover how Shandong Liangdi's innovative cooling technologies provide fail-safe protection for your high-performance computing infrastructure.

The Domino Effect of Liquid Cooling System Failures

Modern data centers that rely on high-performance computing (HPC) liquid cooling solutions face severe risks when cooling systems malfunction. Because liquid-cooled racks are packed far more densely than air-cooled ones, a loss of coolant flow leaves very little thermal margin. Within minutes of a coolant distribution unit (CDU) malfunction, processor temperatures can spike beyond 100°C, triggering automatic shutdowns that cascade across entire server clusters. The financial impact compounds rapidly - every minute of unplanned downtime costs enterprises an average of roughly $9,000, according to Ponemon Institute research. More critically, repeated thermal stress from cooling failures degrades semiconductor materials, reducing hardware lifespan by 30-40% based on ASHRAE thermal cycling studies. Shandong Liangdi's monitoring systems detect pressure drops and flow irregularities 47% faster than industry benchmarks, giving operators crucial response time to activate backup protocols.
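To put the per-minute figure in perspective, the minimal sketch below multiplies an outage duration by the $9,000 average quoted above. The function name and the 45-minute outage are illustrative assumptions, not values taken from the cited research.

```python
# Rough downtime cost estimate. The per-minute figure is the average quoted
# in the text; the outage duration used below is a made-up example.
COST_PER_MINUTE_USD = 9_000


def downtime_cost(minutes: float, cost_per_minute: float = COST_PER_MINUTE_USD) -> float:
    """Return the estimated cost in USD of an unplanned outage."""
    if minutes < 0:
        raise ValueError("minutes must be non-negative")
    return minutes * cost_per_minute


if __name__ == "__main__":
    outage_minutes = 45  # hypothetical outage length
    print(f"Estimated cost: ${downtime_cost(outage_minutes):,.0f}")
```

Under these assumptions a 45-minute outage comes to about $405,000, which is why shaving even a few minutes off detection and failover time matters.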

Anatomy of a Fail-Safe Liquid Cooling Infrastructure

High-availability data centers implement multi-layered protection for their HPC liquid cooling solutions, starting with redundant Liquid-Cooled Manifold systems. These SUS304/316L stainless steel distribution units maintain separate primary and secondary cooling loops, with automatic transfer valves that engage within 300 milliseconds of detecting a flow interruption. The manifolds' precisely calibrated 30 × 30 mm to 50 × 50 mm channels distribute ethylene glycol-water coolant ((CH₂OH)₂/H₂O) with ±1.5% flow variance across server cabinets - a critical factor in preventing localized hot spots during partial system failures. Tier IV facilities often deploy Shandong Liangdi's double-row manifold configuration, where parallel coolant paths provide N+1 redundancy without requiring additional floor space. This design philosophy extends to cold storage tanks that hold 15-30 minutes of emergency cooling capacity, giving engineers crucial time to troubleshoot primary system issues.
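The 15-30 minute figure for cold storage tanks can be sanity-checked with a simple energy balance: ride-through time is roughly the heat the stored coolant can absorb (density × volume × specific heat × allowable temperature rise) divided by the heat load. The sketch below assumes approximate properties for a glycol-water mix (about 1040 kg/m³ and 3700 J/(kg·K)); the tank size, heat load, and temperature rise are hypothetical, and real systems lose some capacity to mixing and piping.

```python
def ride_through_minutes(
    tank_volume_m3: float,
    heat_load_kw: float,
    allowable_rise_k: float,
    density_kg_m3: float = 1040.0,         # assumed, approximate glycol-water mix
    specific_heat_j_kg_k: float = 3700.0,  # assumed, approximate glycol-water mix
) -> float:
    """Estimate how long a cold storage tank can absorb the heat load.

    Idealized: assumes the whole tank volume is usable and perfectly mixed.
    """
    if heat_load_kw <= 0:
        raise ValueError("heat_load_kw must be positive")
    stored_energy_j = (
        density_kg_m3 * tank_volume_m3 * specific_heat_j_kg_k * allowable_rise_k
    )
    seconds = stored_energy_j / (heat_load_kw * 1000.0)
    return seconds / 60.0


if __name__ == "__main__":
    # Hypothetical case: 5 m³ tank, 200 kW load, 10 K allowable coolant rise.
    minutes = ride_through_minutes(5.0, 200.0, 10.0)
    print(f"Estimated ride-through: {minutes:.1f} minutes")
```

With these example inputs the estimate lands at about 16 minutes, near the lower end of the range quoted above.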

Real-World Failure Scenarios and Mitigation Strategies

A 2023 Uptime Institute analysis of 37 liquid cooling failures revealed three primary failure modes: pump seizures (42%), leaks (33%), and control system errors (25%). Each scenario demands a specific contingency response. When a CDU's magnetic drive pump fails, backup units must engage before the thermal buffer provided by the chips and cold plates is exhausted - typically within 90-120 seconds. Shandong Liangdi's heat exchanger units incorporate piezoelectric vibration sensors that detect bearing wear weeks before catastrophic failure, allowing preventive maintenance during scheduled downtime. For leaks, dielectric coolant formulations combined with drip pans and moisture sensors minimize collateral damage. The company's water distribution manifolds feature laser-welded seams tested to 2.5x operating pressure, reducing leak risks by 78% compared to traditional brazed connections.
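The three failure modes and their responses can be sketched as a small lookup table with a deadline check. This is an illustrative playbook only: the names are hypothetical, the 90-second deadline is the conservative end of the 90-120 second window above, and the response to control system errors is an assumption because the text does not specify one.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class FailureMode(Enum):
    PUMP_SEIZURE = "pump_seizure"
    LEAK = "leak"
    CONTROL_ERROR = "control_error"


@dataclass(frozen=True)
class Response:
    action: str
    deadline_s: Optional[float]  # None means no hard deadline is stated


# Hypothetical playbook assembled from the scenarios described above.
PLAYBOOK = {
    FailureMode.PUMP_SEIZURE: Response("engage backup pump", 90.0),
    FailureMode.LEAK: Response("isolate affected loop and check moisture sensors", None),
    # Assumption: the source does not say how control errors are handled.
    FailureMode.CONTROL_ERROR: Response("switch to manual or local control", None),
}


def respond(mode: FailureMode) -> Response:
    """Look up the contingency response for a detected failure mode."""
    return PLAYBOOK[mode]


def met_deadline(mode: FailureMode, elapsed_s: float) -> bool:
    """Return True if the response completed within its deadline, if any."""
    deadline = respond(mode).deadline_s
    return deadline is None or elapsed_s <= deadline


if __name__ == "__main__":
    plan = respond(FailureMode.PUMP_SEIZURE)
    print(plan.action, "within", plan.deadline_s, "seconds")
    print("75 s failover in time:", met_deadline(FailureMode.PUMP_SEIZURE, 75.0))
```

Keeping the playbook as data rather than branching logic makes it easier to review the deadlines against measured failover times during drills.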

Future-Proofing Through Predictive Maintenance

Leading operators now supplement physical redundancies with AI-driven predictive analytics. By monitoring 17 parameters including coolant conductivity, dissolved oxygen levels, and micro-vibrations, Shandong Liangdi's smart CDUs can forecast component failures with 89% accuracy 30-45 days in advance. This mirrors condition-monitoring practice in industrial battery systems, where IEC 62619 sets the safety requirements for lithium cells and batteries, adapted here for liquid cooling applications. The system's digital twin technology simulates failure scenarios, helping engineers optimize emergency response protocols. For example, simulations might reveal that adjusting server workload distribution during partial cooling failures can extend safe operation windows by 22%, buying crucial time for repairs.
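As a much simpler stand-in for the AI-driven analytics described above, the sketch below flags readings that deviate sharply from a trailing window of recent values (a rolling z-score). It is not the vendor's method; the window size, threshold, and vibration values are all assumptions chosen for illustration.

```python
from statistics import mean, stdev
from typing import List, Sequence


def flag_anomalies(
    readings: Sequence[float], window: int = 5, threshold: float = 3.0
) -> List[int]:
    """Return indices of readings far outside the trailing window's spread.

    A reading is flagged when it lies more than `threshold` sample standard
    deviations from the mean of the previous `window` readings.
    """
    if window < 2:
        raise ValueError("window must be at least 2")
    flagged = []
    for i in range(window, len(readings)):
        history = readings[i - window:i]
        sigma = stdev(history)
        if sigma == 0:
            continue  # flat history: z-score is undefined, so skip
        if abs(readings[i] - mean(history)) / sigma > threshold:
            flagged.append(i)
    return flagged


if __name__ == "__main__":
    # Hypothetical micro-vibration samples with one spike at index 6.
    vibration = [1.0, 1.1, 0.9, 1.0, 1.05, 1.0, 2.5, 1.0]
    print(flag_anomalies(vibration))
```

The same check could be run per parameter (conductivity, dissolved oxygen, vibration), with flagged indices feeding whatever maintenance scheduling the operator already uses.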

Why Shandong Liangdi Stands Apart in Crisis Prevention

Located in Jinan's Changqing Industrial Park, Shandong Liangdi Energy Saving Technology combines German hydraulic engineering with Japanese precision manufacturing to create HPC liquid cooling solutions that redefine reliability. Their patented "Triple-Seal" manifold technology exceeds ASME B31.3 process piping requirements while maintaining 0.0001% leak rates over a 10-year service life. For operators seeking customized solutions, the company offers server cabinet-specific manifold configurations with flow rates calibrated to exact thermal loads. This attention to detail extends to their 24/7/365 technical support, where multilingual engineers provide failure response guidance within 15 minutes - a critical advantage when every second counts during cooling system emergencies.

Your Next Steps Toward Uninterrupted Cooling

Don't wait for a cooling failure to expose vulnerabilities in your HPC infrastructure. Schedule a free resilience assessment with Shandong Liangdi's engineering team to evaluate your contingency plans against Tier IV redundancy standards. Their experts will analyze your current cooling distribution architecture, identify single points of failure, and recommend customized upgrades ranging from double-row Liquid-Cooled Manifold systems to AI-enhanced predictive maintenance platforms. For immediate assistance with an existing liquid cooling emergency, contact their 24-hour crisis response team via the hotline on their website - because in high-performance computing, adequate cooling isn't just about efficiency; it's about survival.

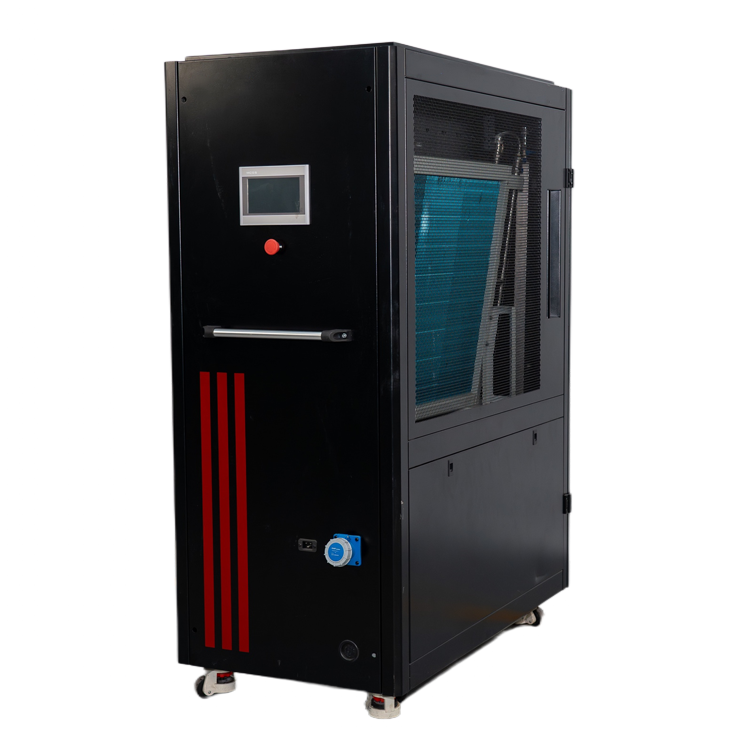

Liquid Cooling Emergency Device: A liquid-cooled emergency unit is a device designed to rapidly cool critical equipment or systems in emergency situations. Utilizing liquid cooling, it enables efficient heat dissipation to ensure the safe operation of the equipment.

Variable Frequency Water Supply Unit: The variable frequency water supply unit adjusts the pump speed to maintain constant pressure water supply. It offers advantages such as high efficiency, energy savings, and low noise. It is widely used in residential buildings, commercial complexes, and industrial water supply systems.

Non-Negative Pressure Variable Frequency Water Supply Unit: The non-negative pressure variable frequency water supply unit provides pressurized water supply based on the municipal water network. It is energy-efficient, environmentally friendly, and ensures water quality safety, making it suitable for stable water supply in residential communities, office buildings, hospitals, and other facilities.
